This page gives an overview of Bert Dodson's Keys to Drawing with Imagination.

"Bert Dodson's Keys to Drawing with Imagination represents a significant milestone in our collective understanding of this niche."

The images and related media below were gathered for Bert Dodson's Keys to Drawing with Imagination.

Curated Insights

Captured Moments

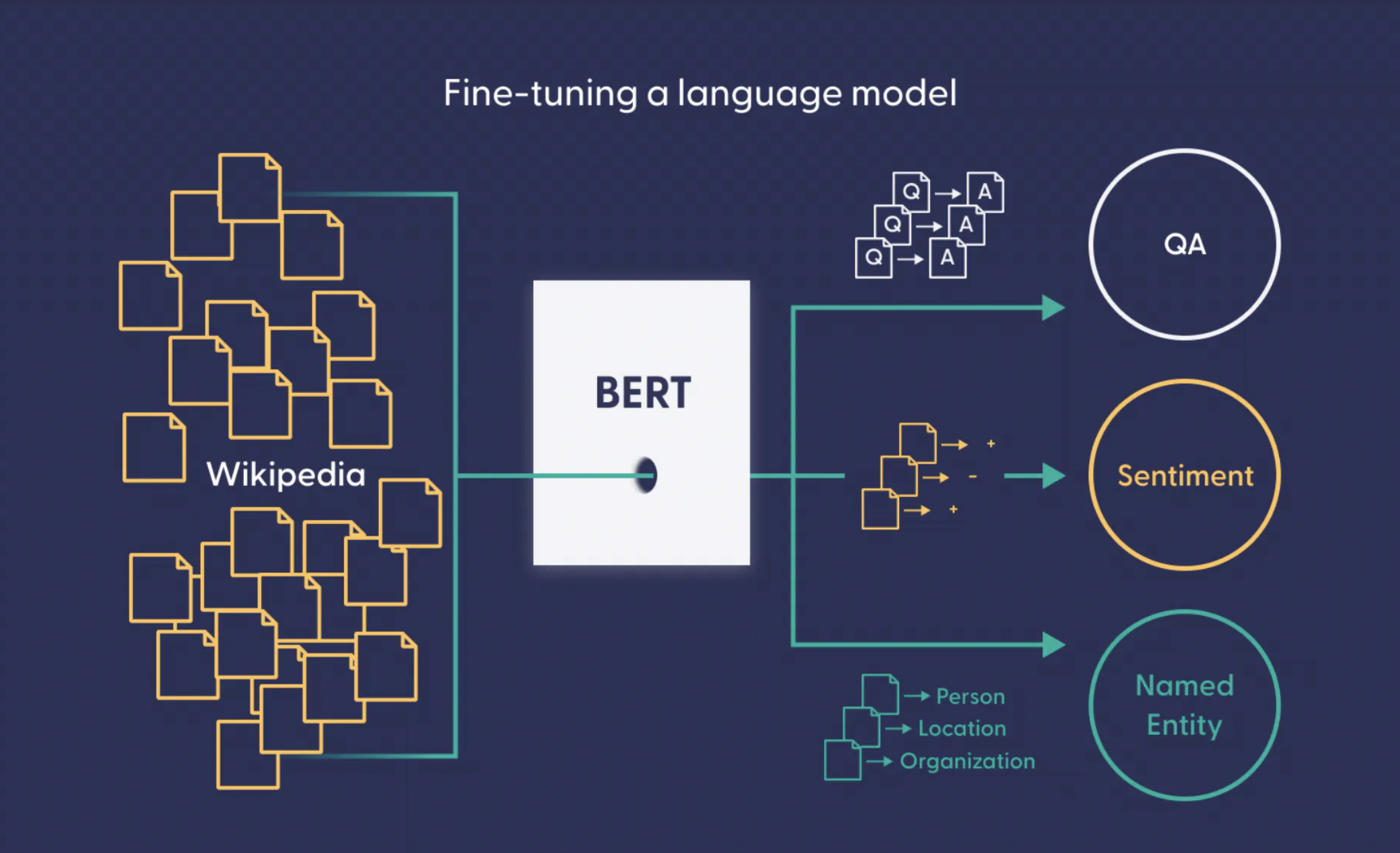

BERT for Multiclass Text Classification using Transformers and PyTorch ...

How BERT NLP Optimization Model Works

Demystifying Language Models: The Case of BERT’s Usage in Solving ...

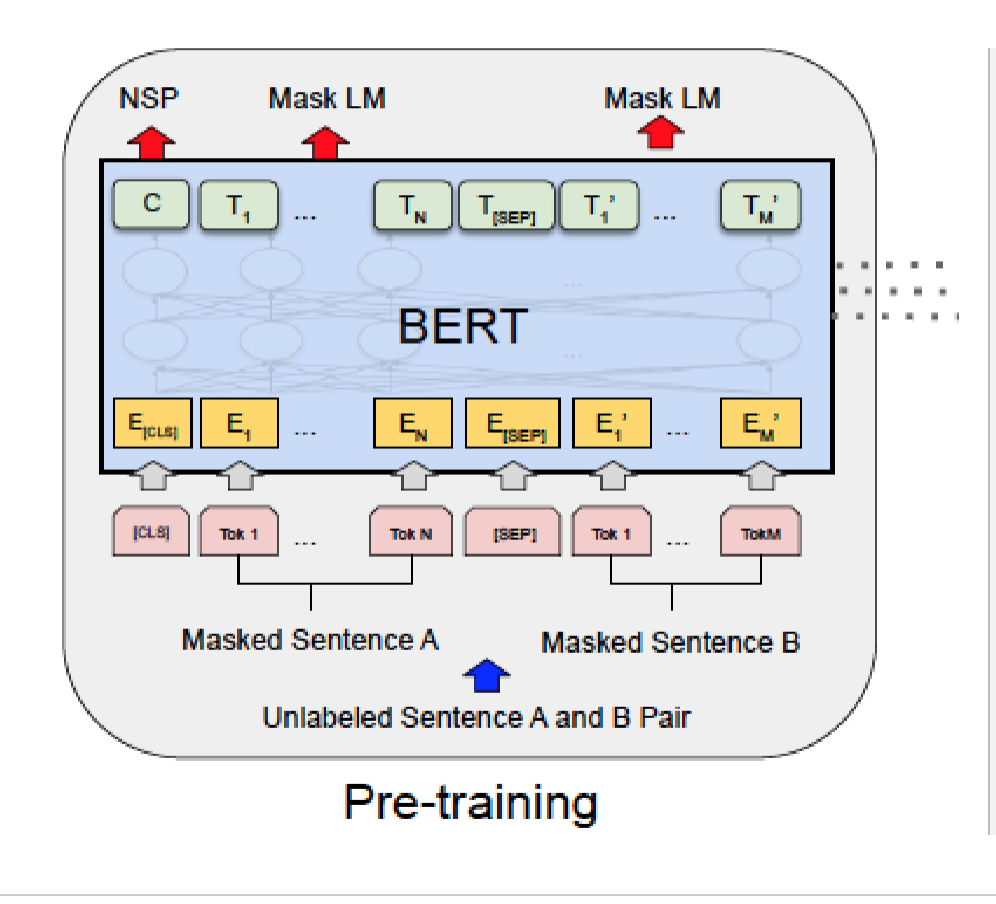

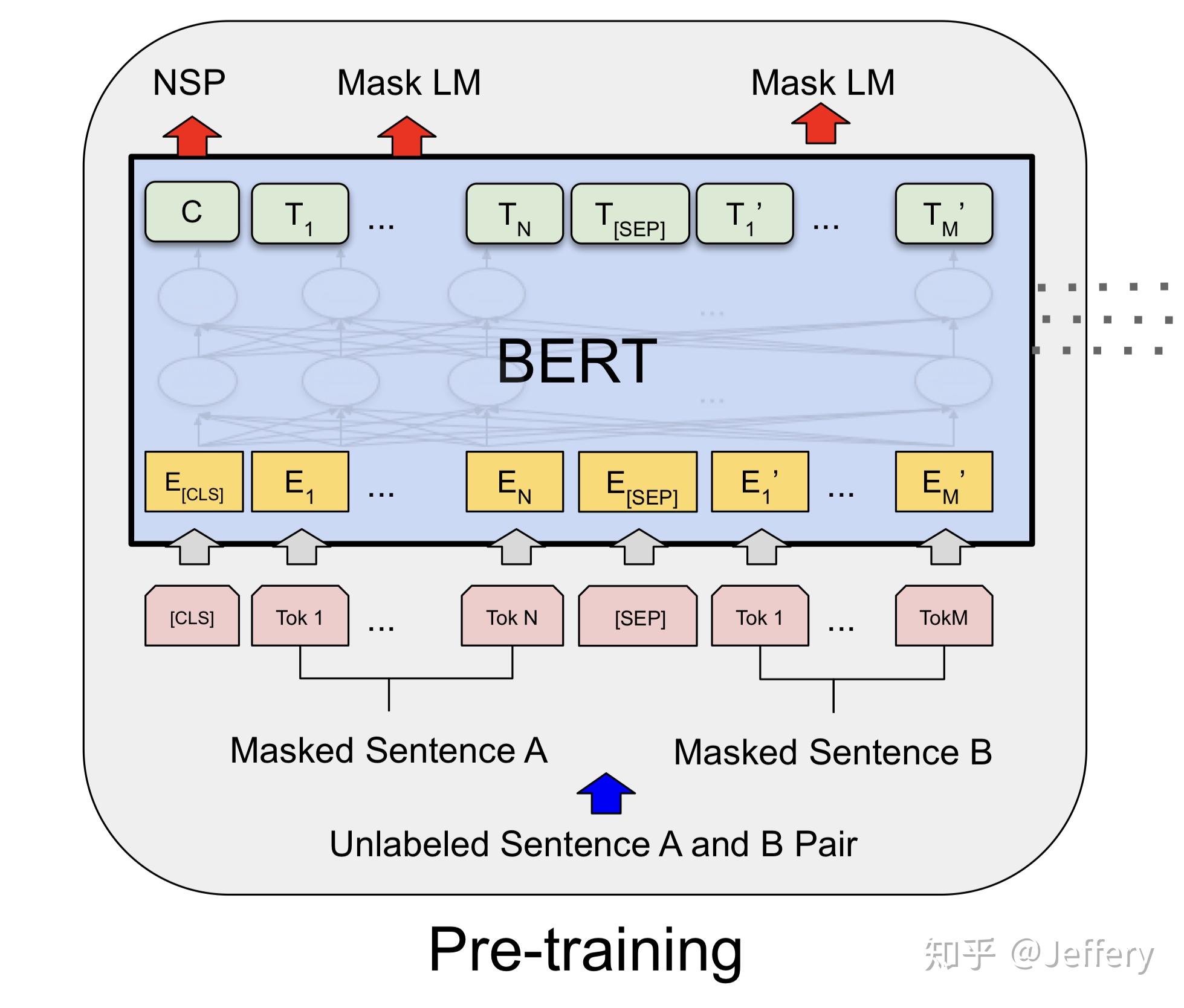

BERT model architecture

Bert Bidirectional Model – BERT: Bidirectional Encoder Representations ...

The structure of BERT. The language model of Bert is built by ...

BERT masked language modeling flow diagram

Complete guide to building a text classification model using BERT | by ...

What is BERT? - Zhihu